News

A novel form of an Alzheimer’s protein found in the fluid that surrounds the brain and spinal cord indicates what stage of the disease a person is in, and tracks with tangles of tau protein in the brain, according to a study from researchers at Washington University School of Medicine in St. Louis. Tau tangles are thought to be toxic to neurons, and their spread through the brain foretells the death of brain tissue and cognitive decline. Tangles appear as the early, asymptomatic stage of Alzheimer’s develops into the symptomatic stage.

The discovery of so-called microtubule binding region tau (MTBR tau) in the cerebrospinal fluid could lead to a way to diagnose people in the earliest stages of Alzheimer’s disease, before they have symptoms or when their symptoms are still mild and easily misdiagnosed. It also could accelerate efforts to find treatments for the devastating disease, by providing a relatively simple way to gauge whether an experimental treatment slows or stops the spread of toxic tangles.

The study is published Dec. 7 in the journal Brain.

“This MTBR tau fluid biomarker measures tau that makes up tangles and can confirm the stage of Alzheimer’s disease by indicating how much tau pathology is in the brains of Alzheimer’s disease patients,” said senior author Randall J. Bateman, MD, the Charles F. and Joanne Knight Distinguished Professor of Neurology. Bateman treats patients with Alzheimer’s disease on the Washington University Medical Campus. “If we can translate this into the clinic, we’d have a way of knowing whether a person’s symptoms are due to tau pathology in Alzheimer’s disease and where they are in the disease course, without needing to do a brain scan. As a physician, this information is invaluable in informing patient care, and in the future, to guide treatment decisions.”

Alzheimer’s begins when a brain protein called amyloid starts forming plaques in the brain. During this amyloid stage, which can last two decades or more, people show no signs of cognitive decline. However, soon after tangles of tau begin to spread in the neurons, people start exhibiting confusion and memory loss, and brain scans show increasing atrophy of brain tissue.

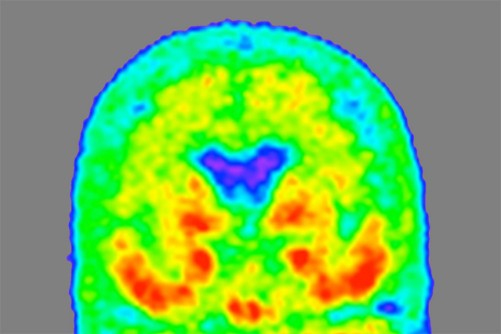

Tau tangles can be detected by positron emission tomography (PET) brain scans, but brain scans are time-consuming, expensive and not available everywhere. Bateman and colleagues are developing diagnostic blood tests for Alzheimer’s disease based on amyloid or a different form of tau, but neither test can pin down the amount of tau tangles across the stages of disease.

MTBR tau is an insoluble piece of the tau protein, and the primary component of tau tangles. Bateman and first author Kanta Horie, PhD, a visiting scientist in Bateman’s lab, realized that specific MTBR tau species were enriched in the brains of people with Alzheimer’s disease, and that measuring levels of the species in the cerebrospinal fluid that bathes the brain might be a way to gauge how broadly the toxic tangles have spread through the brain. Previous researchers using antibodies against tau had failed to detect MTBR tau in the cerebrospinal fluid. But Horie and colleagues developed a new method based on using chemicals to purify tau out of a solution, followed by mass spectrometry.

Using this technique, Horie, Bateman and colleagues analyzed cerebrospinal fluid from 100 people in their 70s. Thirty had no cognitive impairment and no signs of Alzheimer’s; 58 had amyloid plaques with no cognitive symptoms, or with mild or moderate Alzheimer’s dementia; and 12 had cognitive impairment caused by other conditions. The researchers found that levels of a specific form — MTBR tau 243 — in the cerebrospinal fluid were elevated in the people with Alzheimer’s and that it increased the more advanced a person’s cognitive impairment and dementia were.

The researchers verified their results by following 28 members of the original group over two to nine years. Half of the participants had some degree of Alzheimer’s at the start of the study. Over time, levels of MTBR tau 243 significantly increased in the Alzheimer’s disease group, in step with a worsening of scores on tests of cognitive function.

The gold standard for measuring tau in the living brain is a tau-PET brain scan. The amount of tau visible in a brain scan correlates with cognitive impairment. To see how their technique matched up to the gold standard, the researchers compared the amount of tau visible in brain scans of 35 people — 20 with Alzheimer’s and 15 without — with levels of MTBR tau 243 in the cerebrospinal fluid. MTBR tau 243 levels were highly correlated with the amount of tau identified in the brain scan, suggesting that their technique accurately measured how much tau — and therefore damage — had accumulated in the brain.

“Right now there is no biomarker that directly reflects brain tau pathology in cerebrospinal fluid or the blood,” Horie said. “What we’ve found here is that a novel form of tau, MTBR tau 243, increases continuously as tau pathology progresses. This could be a way for us to not only diagnose Alzheimer’s disease but tell where people are in the disease. We also found some specific MTBR tau species in the space between neurons in the brain, which suggests that they may be involved in spreading tau tangles from one neuron to another. That finding opens up new windows for novel therapeutics for Alzheimer’s disease based on targeting MTBR tau to stop the spread of tangles.”

Photo Credit:

Tammie Benzinger/Knight ADRC

A “heat map” of the brain of a person with mild Alzheimer’s dementia shows where tau protein has accumulated, with areas of higher density in red and orange, and lower density in green and blue. Researchers at Washington University School of Medicine in St. Louis have found a form of tau in spinal fluid that tracks with tau tangles in the brain and indicates what stage of the disease a person is in.

Newswise — BINGHAMTON, NY -- Bacterial infections have become one of the biggest health problems worldwide, and a recent study shows that COVID-19 patients have a much greater chance of acquiring secondary bacterial infections, which significantly increases the mortality rate.

Combatting the infections is no easy task, though. When antibiotics are carelessly and excessively prescribed, that leads to the rapid emergence and spread of antibiotic-resistant genes in bacteria, creating an even larger problem. According to the Centers for Disease Control and Prevention, 2.8 million antibiotic-resistant infections happen in the U.S. each year, and more than 35,000 people die from them.

One factor slowing down the fight against antibiotic-resistant bacteria is the amount of time needed to test for it. The conventional method uses extracted bacteria from a patient and compares lab cultures grown with and without antibiotics, but results can take one to two days, increasing the mortality rate, the length of hospital stay and overall cost of care.

Associate Professor Seokheun “Sean” Choi — a faculty member in the Department of Electrical and Computer Engineering at Binghamton University’s Thomas J. Watson College of Engineering and Applied Science — is researching a faster way to test bacteria for antibiotic resistance.

“To effectively treat the infections, we need to select the right antibiotics with the exact dose for the appropriate duration,” he said. “There’s a need to develop an antibiotic-susceptibility testing method and offer effective guidelines to treat these infections.”

In the past few years, Choi has developed several projects that cross “papertronics” with biology, such as one that developed biobatteries using human sweat.

This new research — titled “A simple, inexpensive, and rapid method to assess antibiotic effectiveness against exoelectrogenic bacteria” and published in November’s issue of the journal Biosensors and Bioelectronics — relies on the same principles as the batteries: Bacterial electron transfer, a chemical process that certain microorganisms use for growth, overall cell maintenance and information exchange with surrounding microorganisms.

“We leverage this biochemical event for a new technique to assess the antibiotic effectiveness against bacteria without monitoring the whole bacterial growth,” Choi said. “As far as I know, we are the first to demonstrate this technique in a rapid and high-throughput manner by using paper as a substrate.”

Working with PhD students Yang Gao (who earned his degree in May and is now working as a postdoctoral researcher at the University of Texas at Austin), Jihyun Ryu and Lin Liu, Choi developed a testing device that continuously monitors bacteria’s extracellular electron transfer.

A medical team would extract a sample from a patient, inoculate the bacteria with various antibiotics over a few hours and then measure the electron transfer rate. A lower rate would mean that the antibiotics are working.

“The hypothesis is that the antibiotic exposure could cause sufficient inhibition to the bacterial electron transfer, so the readout by the device would be sensitive enough to show small variations in the electrical output caused by changes in antibiotic effectiveness,” Choi said.
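The readout logic described above, in which a lower electron transfer rate suggests a more effective antibiotic, can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names, the use of an untreated control reading, and the sample values are all hypothetical and do not come from the published study.

```python
def relative_inhibition(treated_output: float, control_output: float) -> float:
    """Fractional drop in electrical output relative to an untreated control.

    Assumption: both readings are in the same arbitrary units and the
    control (no antibiotic) reading is positive.
    """
    if control_output <= 0:
        raise ValueError("control output must be positive")
    return 1.0 - treated_output / control_output


def rank_antibiotics(readings: dict[str, float],
                     control_output: float) -> list[tuple[str, float]]:
    """Order antibiotics from strongest to weakest inhibition of the signal."""
    scored = [
        (name, relative_inhibition(output, control_output))
        for name, output in readings.items()
    ]
    # Larger inhibition means the electrical output fell further below control.
    return sorted(scored, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    # Hypothetical sensor readings, made up purely for illustration.
    sample = {"drug_a": 20.0, "drug_b": 90.0}
    for name, inhibition in rank_antibiotics(sample, control_output=100.0):
        print(f"{name}: {inhibition:.0%} inhibition")
```

In this sketch the antibiotic that suppresses the signal most is listed first; a real device would also need replicate sensors and a threshold for calling a strain resistant, neither of which is specified in the article.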

The device could provide results about antibiotic resistance in just five hours, which would serve as an important point-of-care diagnostic tool, especially in areas with limited resources.

The prototype — built in part with funding from the National Science Foundation and the U.S. Office of Naval Research — has eight sensors printed on its paper surface, but that could be extended to 64 or 96 sensors if medical professionals wanted to build other tests into the device.

Building on this research, Choi already knows where he and his students would like to go next: “Although many bacteria are energy-producing, some pathogens do not perform extracellular electron transfer and may not be used directly in our platform. However, various chemical compounds can assist the electron transfer from non-electricity-producing bacteria.

“For instance, E. coli cannot transfer electrons from the inside of the cell to the outside, but with the addition of some chemical compounds, they can generate electricity. Now we are working on how to make this technique general to all bacteria cells.”

When the protein TRPC1 is exposed to weak magnetic fields, it stimulates muscle cells to respond as if the body has exercised

Newswise — As people age, they progressively lose muscle mass and strength, and this can lead to frailty and other age-related diseases. As the causes for the decline remain largely unknown, promoting muscle health is an area of great research interest. A recent study led by researchers from the National University of Singapore (NUS) has shown how a molecule found in muscles responds to weak magnetic fields to promote muscle health.

Led by Associate Professor Alfredo Franco-Obregón from the NUS Institute for Health Innovation and Technology (iHealthtech), the team found that a protein known as TRPC1 responds to weak oscillating magnetic fields. Such a response is normally activated when the body exercises. This responsiveness to magnets could be used to stimulate muscle recovery, which could improve the quality of life for patients with impaired mobility in an increasingly ageing society.

“The use of pulsed magnetic fields to simulate some of the effects of exercise will greatly benefit patients with muscle injury, stroke, and frailty as a result of advanced age,” said lead researcher Assoc Prof Franco-Obregón, who is also from the NUS Department of Surgery.

The NUS research team collaborated with the Swiss Federal Institute of Technology (ETH) on this study, and their results were first published online in Advanced Biosystems on 2 September 2020. The work was also featured on the cover of the journal’s print edition on 27 November 2020.

Magnets and muscle health

The magnetic fields that the research team used to promote muscle health were only 10 to 15 times stronger than the Earth’s magnetic field, yet still much weaker than a common bar magnet, raising the intriguing possibility that weak magnetism is a stimulus that muscles naturally interact with.

To test this theory, the research team first used a special experimental setup to cancel the effect of all surrounding magnetic fields. The researchers found that the muscle cells indeed grew more slowly when shielded from all environmental magnetic fields. These observations strongly supported the notion that the Earth’s magnetic field naturally interacts with muscles to elicit biological responses.

To show the involvement of TRPC1 as an antenna for natural magnetism to promote muscle health, the researchers genetically engineered mutant muscle cells that were unresponsive to any magnetic field by deleting TRPC1 from their genomes. The researchers were then able to reinstate magnetic sensitivity by selectively delivering TRPC1 to these mutant muscle cells in small vesicles that fused with the mutant cells.

In their previous studies, the researchers showed that responses to such magnetic fields were strongly correlated with the presence of TRPC1, and that they included the rejuvenation of cartilage by indirectly regulating the gut microbiome, fat burning and insulin-sensitivity via positive actions on muscle. The present study provided conclusive evidence that TRPC1 serves as a ubiquitous biological antenna to surrounding magnetic fields to modulate human physiology, particularly when targeted for muscle health.

Metabolic changes similar to those achieved with exercise have been observed in previous clinical trials and studies led by Assoc Prof Franco-Obregón. Encouraging benefits of using the magnetic fields to stimulate muscle cells have been found, with as little as 10 minutes of exposure per week. This tantalising possibility, to improve muscle health without exercising, could facilitate recovery and rehabilitation of patients with muscle dysfunction.

Assoc Prof Franco-Obregón shared, “About 40 per cent of an average person’s body is muscle. Our results demonstrate a metabolic interaction between muscle and magnetism which hopefully can be exploited to improve human health and longevity.”

Next steps

This study represents a milestone in the understanding of how a key protein may developmentally react to magnetic fields.

Measures of metabolic health such as weight, blood sugar levels, insulin, and cholesterol are strongly influenced by muscle health. As exercise is a strong modulator of metabolic diseases through the working of the muscles, and magnetic fields exert benefits similar to those of exercise, such magnetism may help patients who are unable to undertake exercise because of injury, disease, or frailty. As such, the NUS iHealthtech research team is now working to extend their study to reduce drug dependence for the treatment of diseases such as diabetes.

“We hope that our research can help alleviate side effects by reducing the use of drugs for disease treatment, and to improve the quality of life of the patients,” said Assoc Prof Franco-Obregón.

This project has recently won the Catalyst Award in the inaugural Healthy Longevity Catalyst Awards conferred by the US National Academy of Medicine. The team was recognised for their breakthrough innovation to extend human health and function later in life.

Photo Credit:

National University of Singapore

Associate Professor Alfredo Franco-Obregón and his team from the NUS Institute for Health Innovation and Technology examined how low amplitude magnetic fields may be used to enhance muscle metabolism. The images on the screen show the cells of two types of muscles - the blue fibres (left) are rapidly fatiguing muscles, the green fibres (right) are slowly fatiguing muscle, and the red fibres are considered transitional fibres.

Researchers conclude that regularly speaking two languages contributes to cognitive reserve and delays the onset of the symptoms associated with cognitive decline and dementia.

Newswise — In addition to enabling us to communicate with others, languages are our instrument for conveying our thoughts, identity, knowledge, and how we see and understand the world. Having a command of more than one enriches us and offers a doorway to other cultures, as discovered by a team of researchers led by scientists at the Open University of Catalonia (UOC) and Pompeu Fabra University (UPF). Using languages actively provides neurological benefits and protects us against cognitive decline associated with ageing.

In a study published in the journal Neuropsychologia, the researchers conclude that regularly speaking two languages -and having done so throughout one's life- contributes to cognitive reserve and delays the onset of the symptoms associated with cognitive decline and dementia.

"We have seen that the prevalence of dementia in countries where more than one language is spoken is 50% lower than in regions where the population uses only one language to communicate", asserts researcher Marco Calabria, a member of the Speech Production and Bilingualism research group at UPF and of the Cognitive NeuroLab at the UOC, and professor of Health Sciences Studies, also at the UOC.

Previous work had already found that the use of two or more languages throughout life could be a key factor in increasing cognitive reserve and delaying the onset of dementia; also, that it entailed advantages of memory and executive functions.

"We wanted to find out about the mechanism whereby bilingualism contributes to cognitive reserve with regard to mild cognitive impairment and Alzheimer's, and if there were differences regarding the benefit it confers between the varying degrees of bilingualism, not only between monolingual and bilingual speakers", points out Calabria, who led the study.

Thus, and unlike other studies, the researchers defined a scale of bilingualism: from people who speak one language but are exposed, passively, to another, to individuals who have an excellent command of both and use them interchangeably in their daily lives. To construct this scale, they took several variables into account such as the age of acquisition of the second language, the use made of each, or whether they were used alternatively in the same context, among others.

The researchers focused on the population of Barcelona, where there is strong variability in the use of Catalan and Spanish, with some districts that are predominantly Catalan-speaking and others where Spanish is mainly spoken. "We wanted to make use of this variability and, instead of comparing monolingual and bilingual speakers, we looked at whether within Barcelona, where everyone is bilingual to varying degrees, there was a degree of bilingualism that presented neuroprotective benefits", Calabria explains.

Bilingualism and Alzheimer's

At four hospitals in Barcelona and its metropolitan area, they recruited 63 healthy individuals, 135 patients with mild cognitive impairment, such as memory loss, and 68 people with Alzheimer's, the most prevalent form of dementia. They recorded their proficiency in Catalan and Spanish using a questionnaire and established the degree of bilingualism of each subject. They then correlated this degree with the age at which the subjects' neurological diagnosis was made and the onset of symptoms.

To better understand the origin of the cognitive advantage, they asked the participants to perform various cognitive tasks, focusing primarily on the executive control system, since the previous studies had suggested that this was the source of the advantage. In all, participants performed five tasks over two sessions, including memory and cognitive control tests.

"We saw that people with a higher degree of bilingualism were given a diagnosis of mild cognitive impairment later than people who were passively bilingual", states Calabria, for whom, probably, speaking two languages and often changing from one to the other is life-long brain training. According to the researcher, this linguistic gymnastics is related to other cognitive functions such as executive control, which is triggered when we perform several actions simultaneously, such as when driving, to help filter relevant information.

The brain's executive control system is related to the control system of the two languages: it must alternate them, making the brain focus on one and then on the other so that one language does not intrude on the other when speaking.

"This system, in the context of neurodegenerative diseases, might offset the symptoms. So, when something does not work properly as a result of the disease, the brain has efficient alternative systems to solve it thanks to being bilingual", Calabria states, who then continues: "we have seen that the more you use two languages and the better language skills you have, the greater the neuroprotective advantage. Active bilingualism is, in fact, an important predictor of the delay in the onset of the symptoms of mild cognitive impairment, a preclinical phase of Alzheimer's disease, because it contributes to cognitive reserve".

Now, the researchers wish to verify whether bilingualism is also beneficial for other diseases, such as Parkinson's or Huntington's disease.

Newswise — DALLAS, November 16, 2020 -- Adults with the healthiest sleep patterns had a 42% lower risk of heart failure regardless of other risk factors compared to adults with unhealthy sleep patterns, according to new research published today in the American Heart Association's flagship journal Circulation. Healthy sleep patterns are rising early in the morning, sleeping 7-8 hours a day and having no frequent insomnia, snoring or excessive daytime sleepiness.

Heart failure affects more than 26 million people, and emerging evidence indicates sleep problems may play a role in the development of heart failure.

This observational study examined the relationship between healthy sleep patterns and heart failure and included data on 408,802 UK Biobank participants, ages 37 to 73 at the time of recruitment (2006-2010). Incidence of heart failure was collected until April 1, 2019. Researchers recorded 5,221 cases of heart failure during a median follow-up of 10 years.

Researchers analyzed sleep quality as well as overall sleep patterns. The measures of sleep quality included sleep duration, insomnia and snoring and other sleep-related features, such as whether the participant was an early bird or night owl and if they had any daytime sleepiness (likely to unintentionally doze off or fall asleep during the daytime).

"The healthy sleep score we created was based on the scoring of these five sleep behaviors," said Lu Qi, M.D., Ph.D., corresponding author and professor of epidemiology and director of the Obesity Research Center at Tulane University in New Orleans. "Our findings highlight the importance of improving overall sleep patterns to help prevent heart failure."

Sleep behaviors were collected through touchscreen questionnaires. Sleep duration was divided into three groups: short, or less than 7 hours a day; recommended, or 7 to 8 hours a day; and prolonged, or 9 hours or more a day.
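The three sleep-duration groups and the five-behavior healthy sleep score can be illustrated with a short Python sketch. The duration cut-offs follow the definitions above; everything else is an assumption made for illustration, including the names `sleep_duration_group`, `SleepBehaviors` and `healthy_sleep_score`, the use of whole reported hours, and the choice to award one point per healthy behavior. The study's actual scoring procedure may differ.

```python
from dataclasses import dataclass


def sleep_duration_group(hours: int) -> str:
    """Classify self-reported sleep duration, given in whole hours per day."""
    if hours < 7:
        return "short"
    if hours <= 8:
        return "recommended"
    return "prolonged"  # 9 hours or more


@dataclass
class SleepBehaviors:
    early_riser: bool
    hours: int
    frequent_insomnia: bool
    snoring: bool
    daytime_sleepiness: bool


def healthy_sleep_score(b: SleepBehaviors) -> int:
    """Count healthy behaviors from 0 to 5.

    Assumption: each of the five behaviors contributes one point.
    """
    return sum([
        b.early_riser,
        sleep_duration_group(b.hours) == "recommended",
        not b.frequent_insomnia,
        not b.snoring,
        not b.daytime_sleepiness,
    ])
```

Under these assumptions, an early riser who sleeps 8 hours with no insomnia, snoring or daytime sleepiness would receive the maximum score of 5.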

After adjusting for diabetes, hypertension, medication use, genetic variations and other covariates, participants with the healthiest sleep pattern had a 42% reduction in the risk of heart failure compared to people with an unhealthy sleep pattern.

They also found that each healthy sleep behavior was independently associated with a lower risk of heart failure. The risk was:

8% lower in early risers;

12% lower in those who slept 7 to 8 hours daily;

17% lower in those who did not have frequent insomnia; and

34% lower in those reporting no daytime sleepiness.

Participant sleep behaviors were self-reported, and information on changes in sleep behaviors during follow-up was not available. The researchers noted other unmeasured or unknown adjustments may have also influenced the findings.

Qi also noted that the study's strengths include its novelty, prospective study design and large sample size.

The first author is Xiang Li, Ph.D.; other co-authors are Qiaochu Xue, M.P.H.; Mengying Wang, M.P.H.; Tao Zhou, Ph.D.; Hao Ma, Ph.D.; and Yoriko Heianza, Ph.D. Author disclosures are detailed in the manuscript.

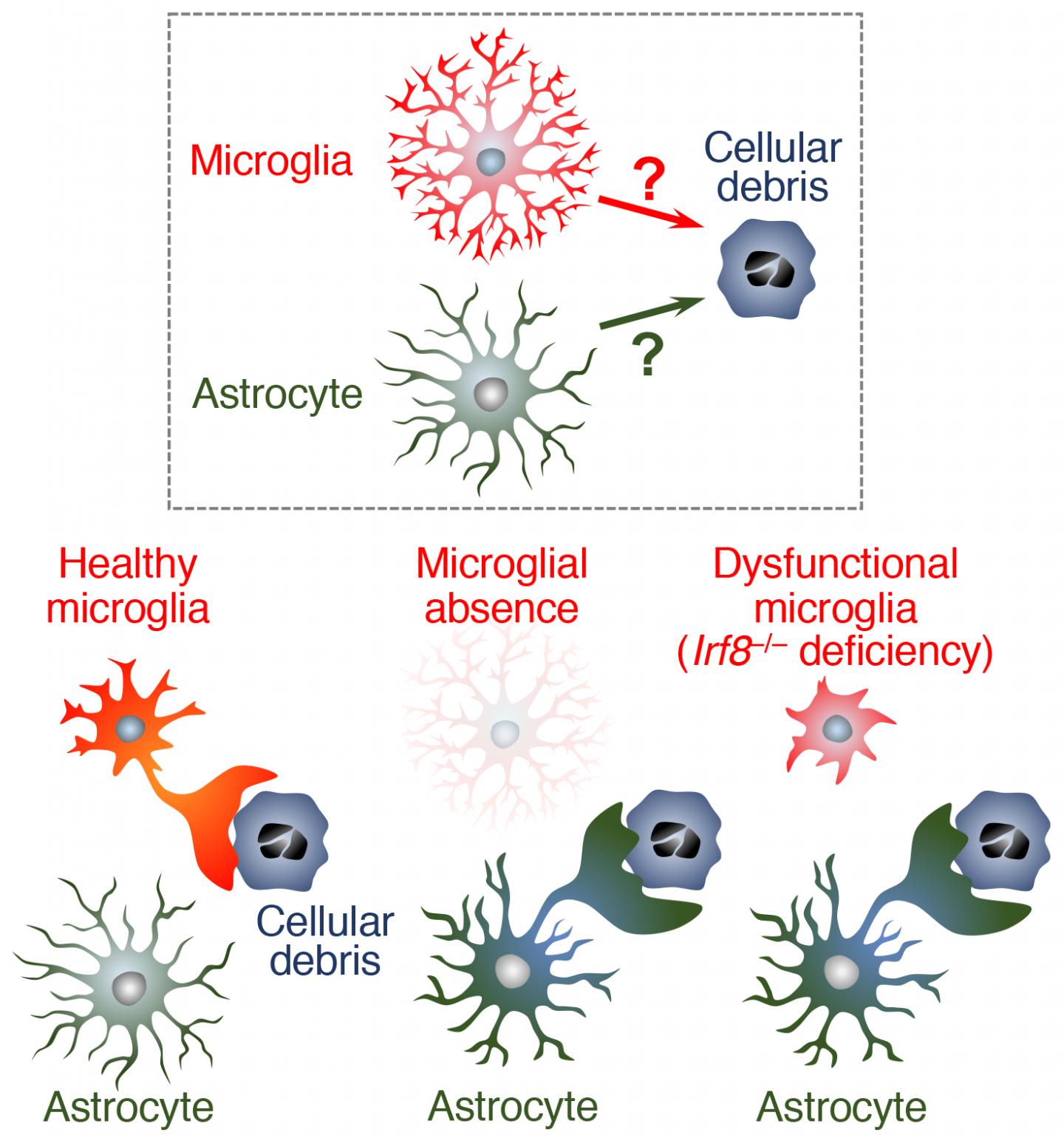

Newswise — Microglia -- the brain's immune cells -- play a primary role in removing cellular debris from the brain. According to a recent study by a Nagoya University-led research team in Japan, another kind of brain cell, called an astrocyte, is also involved in removing debris as a backup to microglia. The finding, published recently in The EMBO Journal, could lead to new therapies that accelerate the removal of cellular debris from the brain and thereby reduce detrimental effects of the debris on surrounding cells.

Even in a healthy brain, neurons die at a certain rate, which increases with age. As dead cells and cellular debris accumulate, they harm surrounding cells, which in turn accelerates neuron death and causes neurodegenerative diseases such as Alzheimer's disease. Microglia -- brain "phagocytes" (a type of cell that engulfs and absorbs bacteria and cellular debris) -- act to clear the danger, but the debris sometimes overwhelms the microglia. This has led to suggestions that another mechanism that helps remove cellular debris is also at work.

To clarify the nature of the alternative debris-clearing mechanism, a research team led by Dr. Hiroshi Kiyama and Dr. Hiroyuki Konishi of the Graduate School of Medicine at Nagoya University first investigated what would happen to microglial debris in the brains of mouse models in which microglial death was induced. As expected, the team observed that dead microglia were cleared, indicating that indeed another phagocyte was at work.

The researchers next analyzed the expression of molecules in the brains of the mouse models and identified astrocytes that play a role in the removal of microglial debris. Then, using mutant mice with phagocytosis-impaired microglia, they examined how astrocytes work when microglia don't function properly. The results showed that almost half of the cellular debris was engulfed by astrocytes, not by microglia. This indicates that astrocytes have the potential to compensate for microglial dysfunction.

The team concluded that not only are astrocytes capable of engulfing cellular debris, but also that they are likely to actually do this when microglia don't function properly.

The team next plans to clarify how astrocytes recognize microglial dysfunction and deploy their phagocytic function. Drs. Kiyama and Konishi say, "Further investigation of how to control astrocytic phagocytosis may lead to new therapies that accelerate efficient debris clearance from aged or injured brains."

Photo credit:

Hiroyuki Konishi

Microglial ablation or microglial dysfunction actuates phagocytic activity of astrocytes. Astrocytes possess phagocytic machinery and have the potential to compensate for microglia with dysfunctional phagocytic activity.

Newswise — COVID-19 has significantly increased public use of face masks to protect others from the wearer’s respiratory droplets as well as the wearer from airborne contaminants. After each wear, however, bacteria from even a healthy wearer’s own respiratory droplets collect on the inside of a mask as well as the outside, which could contain airborne pathogens capable of living on its surface. Although proper sanitization is imperative, many people reuse masks and other face coverings many times without sanitizing them. That is likely because current sanitization methods can be cumbersome.

To address the many pitfalls of sanitizing all types of face masks from N-95s to cloth and surgical masks, a scientist from Florida Atlantic University’s Schmidt College of Medicine has come up with an innovative solution. Patrick Grant, Ph.D., associate professor of biomedical science, has designed a compact and portable sanitizing device for masks and other items that can be used at home or at work.

The “portable hanging rack device” has been designed as an enclosed chamber that comes in two forms – a plastic container with a handle and a stainless steel compartment. The hanging rack and an ultraviolet-C (UV-C) light source are placed within either of these enclosed chambers, and the device is capable of sterilizing up to six masks simultaneously and quickly, killing bacteria, yeasts, mold spores, and viruses. The masks are positioned vertically on the internal rack. To prevent the UV light from harming a user’s skin and eyes, the light source is shielded within the housing enclosure. When UV-C radiation contacts the mask, the surface of the mask is subsequently sanitized as the radiation deactivates the biological components of pathogens. The UV-C light source delivers uninterrupted UV-C radiation to the mask surfaces and uses a UV-C bulb that produces limited ozone.

Grant has tested a number of micro-organisms using this device and has shown its efficacy against pathogens including the highly contagious E. coli, which was eradicated within the device in about one minute. FAU recently filed a provisional patent application for Grant’s novel invention with the United States Patent & Trademark Office.

“People only have access to a limited supply of masks and often don’t have the option of disposing of them after a single use,” said Grant. “Those who wear cloth masks may sanitize them by washing them, but the washing and drying process is often too time-consuming to sustain washing after each wear. Moreover, washing is not an option for those who wear medical-grade masks, and using disinfectant sprays can cause skin irritation or damage the fibers of the mask designed to catch particulates. That’s why I created this device as a time-efficient way to sanitize facial coverings without damaging their effectiveness and enable wearers to safely reuse them in their daily lives.”

Although there are commercially-available UV-C treatment devices, many of the devices permitting sanitization of multiple objects are of a commercial size that is too large for household use. Furthermore, these devices frequently utilize larger UV bulbs, which are not only costly, but also produce ozone, resulting in an unpleasant odor. In addition, current portable devices are not suitably adapted to sanitize the entire surface of multiple masks at once – a concern for families using multiple face coverings on a daily basis.

While handheld-UV wands exist, these wands increase exposure to UV rays and often require the user to hold the wand for a lengthy period of time to properly sanitize the desired surface, increasing the user’s risk of skin burns and damage to the corneas of the eyes. Studies also have shown users of hand-held devices are unable to hold the devices at the angle and for the length of time necessary to generate a stable UV directional output and effectively sanitize a surface. Moreover, open-air UV devices risk UV exposure to commonly found household surfaces such as plastics, which may damage the integrity and appearance of these materials.

When fully developed, this apparatus will effectively sanitize masks as well as other objects such as keys and smart phones in a way that is safe, affordable, odor-free, and suitable for household use. Grant anticipates the cost of the plastic portable container and the steel container to be under $100 and to provide cost savings from an extended life of masks and from potential savings from reducing preventable infections.

Photo Credit:

Patrick Grant, Ph.D., Florida Atlantic University

Video shows two versions of the “portable hanging rack device” and the researcher swabbing a face mask and placing it onto a Petri dish. The cover image is a side-by-side view of bacteria from a face mask before and after using the sanitization device. When UV-C radiation contacts the mask, the surface of the mask is subsequently sanitized as the radiation deactivates the biological components of pathogens.

Newswise — ARLINGTON, Va., October 21, 2020 — A new clinical guideline from the American Society for Radiation Oncology (ASTRO) provides guidance for physicians who use radiation therapy to treat patients with locally advanced rectal cancer. Recommendations outline indications and best practices for pelvic radiation treatments, as well as the integration of radiation with chemotherapy and surgery for stage II-III disease. The guideline, which replaces ASTRO's 2016 guidance for rectal cancer, is published in Practical Radiation Oncology.

Colorectal cancer is the second most common cause of cancer death in the U.S., and half of new colorectal cancer diagnoses are in people age 66 or younger. Rectal cancer diagnoses account for nearly one-third of colorectal cancers; an estimated 43,340 adults will be diagnosed with rectal cancer in 2020. While rectal cancer incidence and mortality rates have dropped among older adults in recent years, they have increased for those younger than age 55.

"As rectal cancer becomes more of a disease of younger adults, long-term survivorship and quality of life considerations become even more important. Part of our motivation was to create guidelines that provide options for different treatments that could potentially improve survival rates and also help preserve patients' quality of life," said Prajnan Das, MD, MPH, chair of the rectal guideline task force, and professor and chief of gastrointestinal radiation oncology at The University of Texas MD Anderson Cancer Center in Houston.

Standard treatment for locally advanced rectal cancer generally involves chemoradiation therapy or short-course radiation without chemotherapy, followed by tumor removal surgery and additional chemotherapy. More recently, several trials have shown potential for emerging paradigms, such as changing the sequencing of treatments or omitting portions of treatments for select patients.

"Different treatments are appropriate for different patients, and the oncology field at large is moving toward personalized care," explained Jennifer Y. Wo, MD, vice chair of the rectal guideline task force and associate professor of radiation oncology at Harvard Medical School and Massachusetts General Hospital in Boston. "Some patients may need less than what is considered a typical course of treatment, while some patients may need more. This guideline focuses on providing options that can be tailored to patients' characteristics and their wishes."

Recommendations in the guideline address patient selection for radiation therapy, delivery of pelvic radiation treatments, options for non-operative management of locally advanced rectal cancer and guidance for follow-up care. Key recommendations include:

Neoadjuvant radiation therapy is strongly recommended for patients with clinical stage II-III rectal cancer to reduce their risk of locoregional recurrence. Radiation therapy for locally advanced rectal cancer should be performed before rather than after surgery. Radiation may be omitted in favor of upfront surgery for some patients at low risk of recurrence, after discussion by a multidisciplinary care team. Clinical staging involving a physical exam and pelvic MRI is critical to determine which patients should receive neoadjuvant radiation therapy.

For patients who require neoadjuvant radiation therapy, both conventionally fractionated radiation and short-course radiation are recommended equally, given high-quality evidence for similar efficacy and patient-reported quality of life outcomes with each treatment. The guideline specifies optimal dosing, fractionation and delivery techniques for radiation therapy.

Recommendations address how to incorporate chemotherapy into the pre-operative setting for patients who are at high risk of recurrence and who would likely benefit from the additional treatment using a total neoadjuvant therapy (TNT) approach. Recommendations also address other sequencing and timing issues for radiation, chemotherapy and surgery, with specific attention to treatment tolerability and potential downstaging.

Organ preservation approaches (i.e., non-operative management and local excision) may present an alternative to radical surgery for select patients, especially those who would have a permanent colostomy or inadequate bowel continence after surgery. The guideline outlines specific criteria for situations where surgery can be avoided, as well as long-term surveillance and care for these patients.

COVID-19 and Rectal Cancer

While the guideline was completed before the COVID-19 pandemic, recommendations can guide clinics as they continue to care for patients. To reduce how frequently patients needed to come into the clinic for treatment, many institutions across the country moved toward short-course radiation in the early months of the pandemic, which aligns with the guideline's recommendations.

"Patients usually complete short-course radiation therapy in one week, compared to five-and-a-half weeks for standard radiation treatment. That is particularly important in the COVID era, when you want to minimize patient time in the hospital and issues like financial toxicity are especially salient," said Dr. Das.

"We have yet to see the true impact of COVID-19, but we know that interruptions in screening likely will lead to fewer patients receiving treatment when their disease is more manageable," said Dr. Wo. "And if that does happen and we start seeing patients with more advanced disease, then the parts of the guidelines that specifically address treatment for high-risk patients will become even more important."

About the Guideline

The guideline was based on a systematic literature review of articles published from January 1999 through April 2019. The multidisciplinary task force included radiation, medical and surgical oncologists, a radiation oncology resident, medical physicists and a patient representative. The guideline was developed in collaboration with the American Society of Clinical Oncology and the Society of Surgical Oncology, and endorsed by the American College of Radiology, Canadian Association of Radiation Oncology, European Society for Radiotherapy and Oncology, the Royal Australian and New Zealand College of Radiologists and the Society of Surgical Oncology.

ASTRO's clinical guidelines are intended as tools to promote appropriately individualized, shared decision-making between physicians and patients. None should be construed as strict or superseding the appropriately informed and considered judgments of individual physicians and patients.

Additional Resources on Colorectal Cancers

Video: Radiation Therapy for Colon, Rectum and Anus Cancers; An Introduction to Radiation Therapy (Spanish)

Brochure: Radiation Therapy for Colon, Rectum and Anus Cancers

Chart: Side Effects of Colorectal Cancer Radiation Treatment

ABOUT ASTRO

The American Society for Radiation Oncology (ASTRO) is the world’s largest radiation oncology society, with more than 10,000 members who are physicians, nurses, biologists, physicists, radiation therapists, dosimetrists and other health care professionals who specialize in treating patients with radiation therapies. The Society is dedicated to improving patient care through professional education and training, support for clinical practice and health policy standards, advancement of science and research, and advocacy. ASTRO publishes three medical journals, International Journal of Radiation Oncology • Biology • Physics, Practical Radiation Oncology and Advances in Radiation Oncology; developed and maintains an extensive patient website, RT Answers; and created the nonprofit foundation Radiation Oncology Institute. To learn more about ASTRO, visit our website, sign up to receive our news and follow us on our blog, Facebook, Twitter and LinkedIn.

Newswise — Rutgers researchers have discovered human gene markers that work together to cause metastatic prostate cancer – cancer that spreads beyond the prostate.

The study, published in the journal Nature Cancer, examined prostate cancer cells from people and mice and found a wide collaboration among 16 genes that leads to metastasis, which often creates treatment challenges.

The gene markers identified can predict whether a prostate cancer patient has a high probability of developing metastasis, including metastasis to the bone.

Prostate cancer is the second leading cause of cancer-related deaths among men in the United States, with a five-year relative survival rate of nearly 100 percent when diagnosed early. Metastatic prostate cancer has a five-year survival rate of 30 percent. Current therapeutics, such as first- and next-generation anti-androgens that target male sex hormones, alongside radiation, chemotherapy and others, are not always effective, and it’s impossible to predict which patients are at risk of developing the advanced late stage of the disease.

“People diagnosed with prostate cancer should now be screened for the protein markers discovered to help determine their risk of developing metastatic prostate cancer, which can help inform more personalized therapy,” said Antonina Mitrofanova, an assistant professor at the Rutgers School of Health Professions and research member at Rutgers Cancer Institute of New Jersey. “Our results show that molecular profiling at the time of diagnosis can help inform more personalized therapy leading to better outcomes for those with this advanced form of disease.”

Researchers say testing for these gene markers can also predict which patients will fail to respond to commonly used androgen-targeting therapies in metastatic disease and can reduce the number of treatment rounds patients undergo.

Researchers, in collaboration with Cory Abate-Shen’s lab at Columbia University, have obtained a patent for their discovery and are looking to develop therapeutics and diagnostic tools.

Newswise — Taking into account two common kidney disease tests may greatly enhance doctors’ abilities to estimate patients’ cardiovascular disease risks, enabling millions of patients to have better preventive cardiovascular care, according to a large international study co-led by researchers at the Johns Hopkins Bloomberg School of Public Health.

The researchers used data from more than nine million individuals around the world to develop and validate a risk-scoring calculation that adds blood and urine measures of kidney disease to the current standard method in the United States for assessing cardiovascular disease risk. The two measures—estimated glomerular filtration rate and urine albumin—are commonly used to reveal chronic kidney disease. CKD, as it’s called, has long been considered a risk factor for cardiovascular disease, although until now CKD-related measures have not been included in standard algorithms for quantifying cardiovascular disease risk.

The researchers showed that the use of their “CKD patch”—a computer-program update—can result in large increases in cardiovascular disease-risk estimates among patients with severe CKD.

The investigators also developed a similar patch to enhance the standard risk-assessment tool used in Europe.

The study appears October 14 in EClinicalMedicine, a new online open-access journal published by The Lancet.

“Adding these two measures of kidney disease, which are frequently available from blood and urine tests at checkups, allows potentially big improvements in the accuracy of a patient’s risk estimates—improvements that should in turn enable doctors to optimize patient care,” says study co-first author Kunihiro Matsushita, MD, an associate professor in the Bloomberg School’s Department of Epidemiology.

“This is a big deal—an estimated ten percent of the United States adult population has kidney disease and potentially would benefit from improved care if this new tool is adopted,” says co-last author Josef Coresh, MD, George W. Comstock Professor in the Department of Epidemiology at the Bloomberg School.

The other co-first author was Simerjot Jassal, MD, of the University of California, San Diego, and the other co-last author was Elke Schaeffner, MD, of Charité University Hospital Berlin. Shoshana Ballew, PhD, assistant scientist in the Bloomberg School’s Department of Epidemiology, helped coordinate the data-gathering. In all, the study included more than 50 researchers.

The reduction of kidney function in CKD can lead to higher blood pressure as well as hormonal and other chemical imbalances, and these in turn promote the narrowing of arteries that supply the heart muscle—conditions known as atherosclerosis and arteriolosclerosis. The American Heart Association and the American College of Cardiology, in their guidelines for physicians, already list CKD as a “risk enhancer” for atherosclerotic cardiovascular disease, but without a specific tool that quantifies the added risk as part of the standard risk calculator.

Since 2009, Coresh, Matsushita, and colleagues have been assembling a large, international database of CKD patients and healthy adults, under a collaboration known as the CKD Prognosis Consortium. For the new study, they analyzed a portion of this database, covering 4.1 million adults around the world, to develop algorithms that estimate cardiovascular disease risk using standard measures plus the two kidney-disease measures. They then validated the accuracy of their algorithms using further samples covering 4.9 million adults.

The two kidney disease measures, estimated glomerular filtration rate and urine albumin, indicate, respectively, the kidneys’ blood-filtering efficiency and the urine level of an essential protein called albumin, which healthy kidneys normally keep out of the urine.

The researchers incorporated these measures in a “CKD patch” to the standard cardiovascular disease-risk estimation algorithm developed by the American Heart Association and the American College of Cardiology. They found that for adults whose results on these kidney-disease tests indicated CKD, the addition of these measures via the CKD patch significantly improved the estimated 10-year risks of atherosclerotic cardiovascular disease.

For example, for patients with “very high-risk” CKD, the estimated 10-year chances of developing atherosclerotic cardiovascular disease were a median of 1.55 times higher than estimates without the CKD patch, while the figures were a median of 1.24 times higher for “high-risk” CKD patients.

The researchers’ CKD patch for the standard European 10-year cardiovascular disease mortality risk estimator also boosted estimated risks, by a median of 2.64 times in very high-risk CKD patients, and 1.86 times in high-risk CKD patients.
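To make the size of these median ratios concrete, the short Python sketch below scales a hypothetical baseline 10-year risk by each reported figure. It is an illustration only and not the researchers' algorithm: the actual CKD patch recalculates risk from each patient's estimated glomerular filtration rate and urine albumin results, and a median ratio across a population does not apply uniformly to any individual. The function name, the category labels and the 10 percent baseline are assumptions made for this example; only the four ratios come from the text above.

```python
# Median ratios of patched to unpatched risk estimates, as reported in the text.
# Keys are (risk tool, CKD category); the labels are hypothetical names.
MEDIAN_RATIOS = {
    ("us", "very_high"): 1.55,
    ("us", "high"): 1.24,
    ("europe", "very_high"): 2.64,
    ("europe", "high"): 1.86,
}


def illustrative_patched_risk(baseline_risk: float, tool: str, ckd_category: str) -> float:
    """Scale a baseline 10-year risk by the reported median ratio.

    Illustration only: the real CKD patch is not a flat multiplier.
    """
    if not 0.0 <= baseline_risk <= 1.0:
        raise ValueError("baseline_risk must be a probability between 0 and 1")
    ratio = MEDIAN_RATIOS[(tool, ckd_category)]
    # A probability cannot exceed 1, so cap the scaled value.
    return min(1.0, baseline_risk * ratio)


if __name__ == "__main__":
    baseline = 0.10  # hypothetical 10 percent baseline risk
    for (tool, category) in MEDIAN_RATIOS:
        patched = illustrative_patched_risk(baseline, tool, category)
        print(f"{tool:7s} {category:10s} {baseline:.1%} -> {patched:.1%}")
```

Under these assumptions, a patient whose unpatched estimate is 10 percent and who falls in the very high-risk CKD group would see that estimate rise to roughly 15.5 percent with the U.S. tool if the median ratio applied to them.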

“These results suggest that doctors have tended to underestimate cardiovascular disease risks in kidney disease patients,” Matsushita says.

The researchers hope that their CKD patches will be adopted widely, enabling more accurate assessments of cardiovascular disease and related mortality risks—which in turn should result in better preventive care including the use of statins and other interventions to ward off cardiovascular disease.

“We also hope that the availability and value of these new algorithms will encourage doctors to order estimated glomerular filtration rate and urine albumin tests for their patients more often,” Coresh says.

The CKD patches are available online at: http://ckdpcrisk.org/ckdpatchscore/ and http://www.ckdpcrisk.org/ckdpatchpce/

The study, “Incorporating kidney disease measures into cardiovascular risk prediction: Development and validation in 9 million adults from 72 datasets,” was funded by the National Kidney Foundation and the National Institute of Diabetes and Digestive and Kidney Diseases.